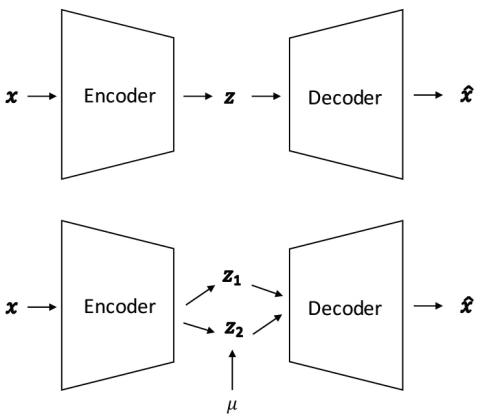

We study cross-lingual voice conversion with non-parallel speech corpora in a one-shot learning setting. Most prior work requires either parallel speech corpora or a sufficient amount of training data from the target speaker. In contrast, we convert arbitrary sentences from an arbitrary source speaker to the target speaker's voice given only one training utterance from the target speaker. To achieve this, we formulate the problem as learning disentangled speaker-specific and context-specific representations, following the idea of [1], which uses a Factorized Hierarchical Variational Autoencoder (FHVAE). After training the FHVAE on multi-speaker training data, given utterances from arbitrary source and target speakers, we estimate these latent representations and then reconstruct the desired utterance in the target speaker's voice. We investigate the effectiveness of the approach by conducting voice conversion experiments with varying numbers of training utterances; it achieves reasonable performance with even just one training utterance. We also examine the speech representation and show that the World vocoder outperforms the Short-time Fourier Transform (STFT) used in [1]. Finally, in subjective tests for both within-language and cross-lingual voice conversion, our approach achieved significantly better or comparable results compared to the VAE-STFT and GMM baselines in speech quality and similarity.
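The conversion step described above can be illustrated with a minimal sketch. It is not the trained model: the encoders and decoder are hypothetical random linear maps standing in for the FHVAE networks, the names `encode_z1`, `encode_z2`, `decode`, and `convert` and all dimensions are assumptions made for illustration, and averaging the target's speaker latent over its single utterance is one plausible way to obtain a speaker representation. The sketch only shows the data flow: keep the context-specific latent of the source, swap in the speaker-specific latent of the target, and decode.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed toy dimensions: acoustic feature size and the two latent sizes.
FEAT_DIM, Z1_DIM, Z2_DIM = 8, 4, 3

# Hypothetical stand-ins for trained FHVAE networks (random linear maps).
W_Z2 = rng.standard_normal((FEAT_DIM, Z2_DIM))
W_Z1 = rng.standard_normal((FEAT_DIM + Z2_DIM, Z1_DIM))
W_DEC = rng.standard_normal((Z1_DIM + Z2_DIM, FEAT_DIM))


def encode_z2(segments):
    """Speaker-specific latent per segment, shape (n_segments, Z2_DIM)."""
    return segments @ W_Z2


def encode_z1(segments, z2):
    """Context-specific latent per segment, conditioned on z2."""
    return np.concatenate([segments, z2], axis=1) @ W_Z1


def decode(z1, z2):
    """Reconstruct acoustic features from both latents."""
    return np.concatenate([z1, z2], axis=1) @ W_DEC


def convert(source_segments, target_segments):
    """Keep the source's context latent, swap in the target's speaker latent."""
    z2_src = encode_z2(source_segments)
    z1_src = encode_z1(source_segments, z2_src)
    # One-shot setting: a single target utterance gives one averaged z2.
    z2_tgt = encode_z2(target_segments).mean(axis=0)
    z2_swapped = np.tile(z2_tgt, (len(source_segments), 1))
    return decode(z1_src, z2_swapped)


# Toy usage: 5 source segments, one target utterance of 7 segments.
source = rng.standard_normal((5, FEAT_DIM))
target = rng.standard_normal((7, FEAT_DIM))
converted = convert(source, target)
```

In a real system the decoded features would be vocoder parameters or spectrogram frames that are then synthesized into a waveform; that step is omitted here.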

Conclusions

We proposed exploiting the FHVAE model for challenging non-parallel and cross-lingual voice conversion, even with a very small number of training utterances, down to a single utterance from the target speaker. We investigated the importance of the speech representation and found that the World vocoder outperformed the STFT used in [1] in experimental evaluation, in both speech quality and similarity. We also examined the effect of the number of training utterances from the target speaker on VC: our approach outperformed the baseline with fewer than 10 sentences and achieved reasonable performance even with only one training utterance. In the subjective tests, our approach achieved significantly better results than both VAE-STFT and GMM in speech quality; in speech similarity, it outperformed VAE-STFT and was comparable to GMM. As future work, we are working on joint end-to-end learning with WaveNet or a WaveNet vocoder, and on building models trained on multi-language corpora, with or without explicit modeling of the different languages, such as providing a language code.